Continuous Diffusion Rivals Discrete in Language Modeling

For the first time, continuous diffusion rivals discrete counterparts on standard language modeling benchmarks.

There is a long-standing belief that continuous diffusion is inherently inferior to discrete diffusion in language modeling. We challenge that belief here.

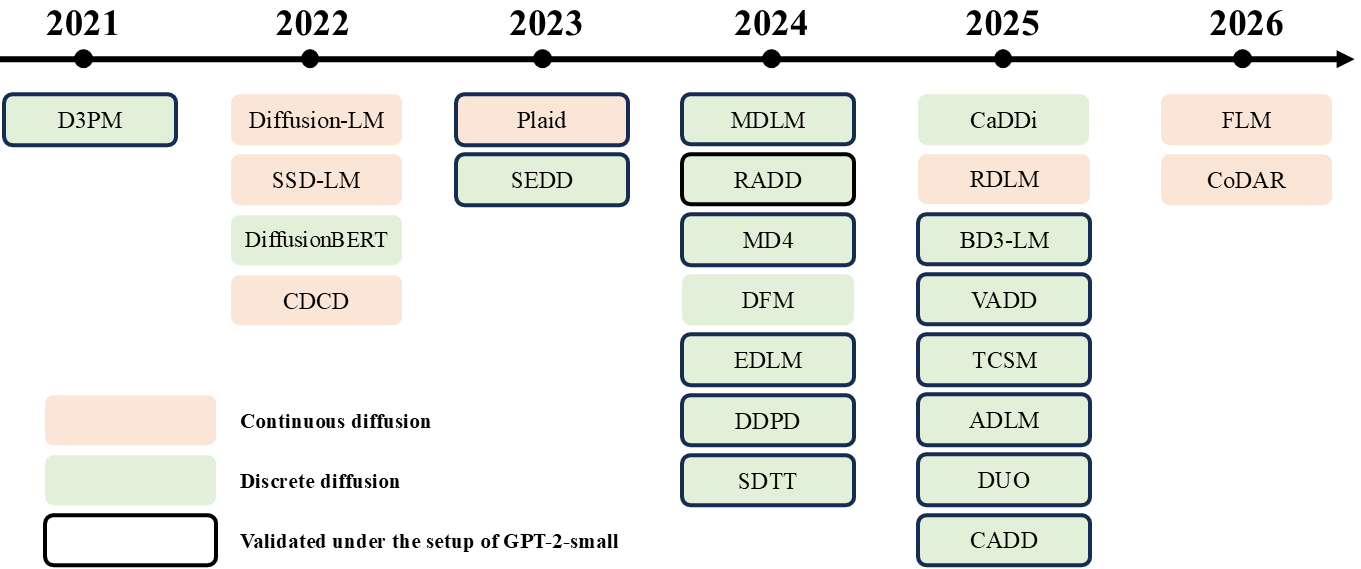

A brief history of diffusion language models

TL; DR: Over the years, the performance of diffusion language models has improved as they came to resemble autoregressive models more closely. Continuous diffusion, the earliest approach, has been regarded as inferior.

Continuous diffusion has dominated image and video generation for years. A natural idea is to extend it to text generation, and early works invested considerable effort in this direction during 2022-2023. However, the empirical results seemed only to confirm the inferiority of continuous diffusion in language modeling, especially at scale. For example, Plaid, the only scaled-up continuous diffusion language model, requires 1B parameters to match the performance of an autoregressive Transformer with ~100M parameters.

The failed attempts at continuous diffusion language models nudged the community toward discrete diffusion, which generates data from uniform states (random tokens) or absorbing (fully masked) states. Unsurprisingly, discrete diffusion was quickly shown to work better on discrete text data in 2024. By diffusing text from fully-masked states, SEDD

The evolution of diffusion language models up to mid-2025 can thus be summarized as:

A clear trend emerges: the more a diffusion model behaves like an autoregressive model, the closer its performance gets to autoregressive performance. This has become a tenet in the industrial practice of diffusion language models, where people scale up block diffusion with causal attention, absorbing states, and block sizes of 4-32.

However, while the performance gap between masked diffusion and autoregressive Transformer is being narrowed, we also witness the loss of diffusion’s characteristics, which limits two original potentials of diffusion:

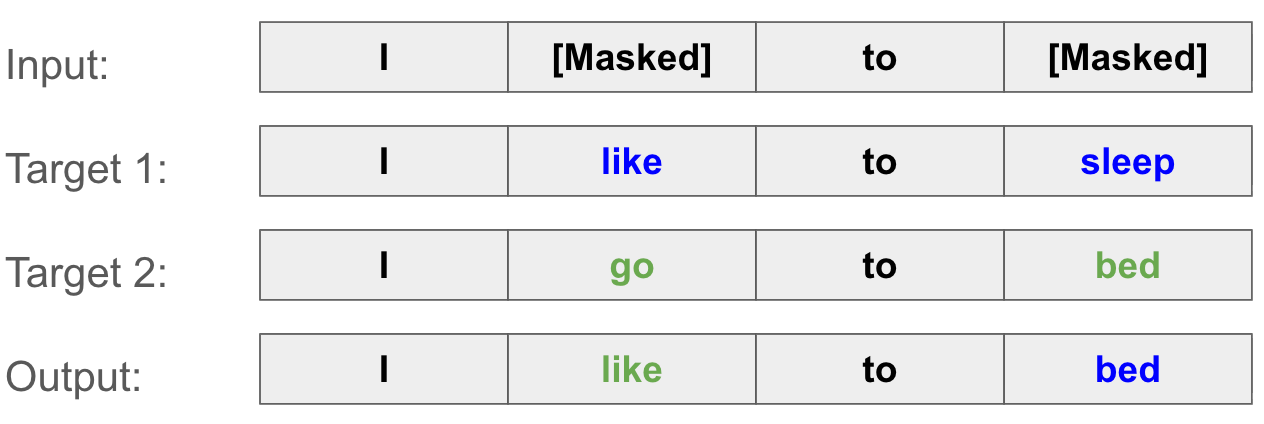

1) Few-step generation. In the image & video domain, continuous diffusion maps different noise points to different data samples, making one-step generation possible through learning this many-to-many mapping. Masked diffusion, however, has its prior distribution collapsed into one single fully masked state. Because of this, few-step generation of masked diffusion suffers from parallel decoding dilemma

2) Controllability. Image and video diffusion can be semantically controlled by manipulating continuous latents. For example, classifier-based and classifier-free guidance

The strengths and trade-offs of masked diffusion raise an important question: if diffusion models must increasingly mimic autoregressive models to perform well, what defines diffusion as an independent family of language models?

Frontier work on diffusion language models took up this question in 2025 by returning to architectures with dispersed priors, trying to recover some of the lost diffusion characteristics. One well-known attempt is DUO

In our recent work, we go one step further back than DUO and revisit the canonical form of diffusion: continuous diffusion. We show that the previous underperformance of continuous diffusion language models is not intrinsic but results from under-optimized training recipes and evaluation protocols. By optimizing 1) the noise schedule, 2) self-conditioning, and 3) the NLL bound, continuous diffusion can rival discrete diffusion, and on some benchmarks even autoregressive models, at the setup and scale of GPT-2-small. Specifically, our method, LangFlow, beats the autoregressive baseline on 4 of 7 zero-shot PPL benchmarks.

Next, we will explain how to achieve that.

Training Continuous Diffusion on Text

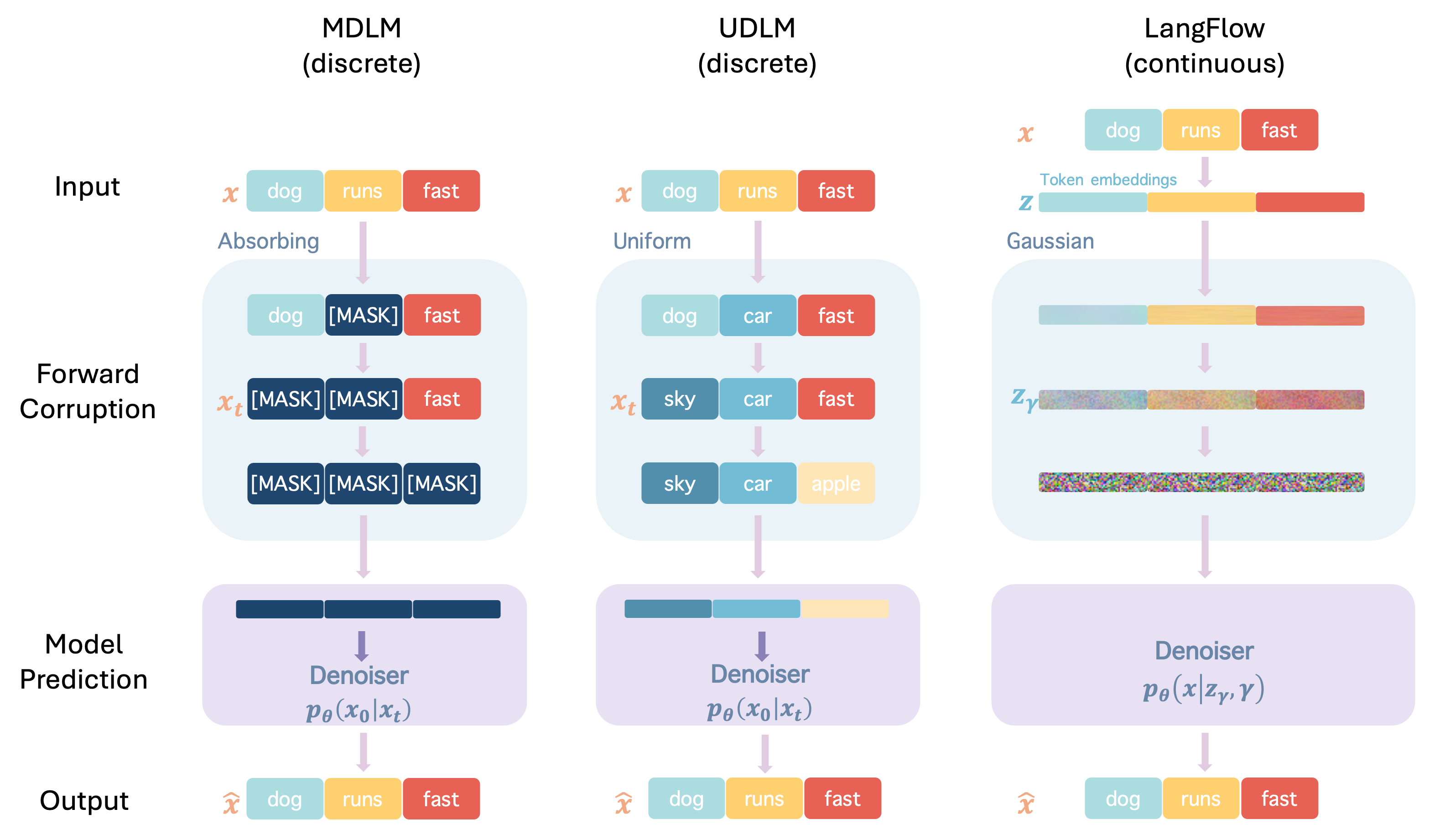

Continuous diffusion in the embedding space

TL; DR: Our model predicts clean logits from noisy text embeddings.

We begin by reframing embedding-space diffusion, a classical framework of continuous diffusion language models. Let \(\mathcal{E} \in \mathbb{R}^{V \times D}\) be an embedding matrix over a vocabulary of size \(V\), mapping each text token \(x^{(i)}\) to a \(D\)-dimensional vector \(\mathbf{e}_{x^{(i)}}\). A sequence of tokens \(\mathbf{x} = (x^{(1)}, \ldots, x^{(L)})\) is thus embedded into a continuous matrix \(\mathbf{z} = (\mathbf{e}_{x^{(1)}}, \ldots, \mathbf{e}_{x^{(L)}}) \in \mathbb{R}^{L \times D}\), and we define the generative target of the flow as this embedding \(\mathbf{z}\).

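Flow Matching (FM) defines a probability path between Gaussian noise and data through the interpolation

\[\mathbf{z}_t = \alpha_t\, \mathbf{z} + \sigma_t\, \boldsymbol{\epsilon}, \quad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}),\]

where \(\alpha_t\) grows from \(0 \to 1\) and \(\sigma_t\) decays from \(1 \to 0\) as \(t\) goes from \(0\) to \(1\).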

Training. Rather than directly regressing on the velocity field, we train a model \(\hat{\mathbf{x}}_\theta^{(i)}(\mathbf{z}_t, t)\) to predict the probability distribution over tokens at each position \(i\). This is principled via Bregman divergence

Training by minimizing the expected Bregman divergence between the one-hot ground truth \(\mathbf{1}_{x^{(i)}}\) and the predicted probabilities \(\hat{\mathbf{x}}_\theta^{(i)}\) achieves posterior matching: the optimal predictor always recovers the true posterior \(p(x^{(i)} \mid \mathbf{z}_t)\), regardless of the specific choice of \(f\). Cross-entropy (CE) is the special case with \(f(\mathbf{p}) = \mathbf{p} \cdot \log \mathbf{p}\), where for one-hot \(\mathbf{p} = \mathbf{1}_{x^{(i)}}\) the divergence simply reduces to \(-\log \hat{x}_\theta^{(i, x^{(i)})}\). This yields the CE training objective:

\[\mathcal{L}_\text{CE}(\theta) = \int \lambda(t)\, \mathbb{E}\left[-\sum_{i=1}^L \log \hat{\mathbf{x}}_\theta^{(i, x^{(i)})}(\mathbf{z}_t, t)\right] dt.\]

Here, \(\hat{\mathbf{x}}_\theta\) is parameterized by a diffusion transformer (DiT)

which we can use to obtain \(\mathbf{v}_\theta(\mathbf{z}_t, t)\) to progressively denoise \(\mathbf{z}_t\).
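The reduction of the Bregman divergence to cross-entropy can be checked numerically. The sketch below assumes the standard definition \(D_f(\mathbf{p}, \mathbf{q}) = f(\mathbf{p}) - f(\mathbf{q}) - \langle \nabla f(\mathbf{q}), \mathbf{p} - \mathbf{q} \rangle\) with \(f(\mathbf{p}) = \sum_k p_k \log p_k\). The function names and the three-token example are illustrative and not part of LangFlow.

```python
import numpy as np

def neg_entropy(p, eps=1e-12):
    """f(p) = sum_k p_k log p_k, treating 0 * log 0 as 0."""
    p = np.asarray(p, dtype=float)
    return float(np.sum(np.where(p > 0, p * np.log(np.maximum(p, eps)), 0.0)))

def bregman_neg_entropy(p, q):
    """D_f(p, q) = f(p) - f(q) - <grad f(q), p - q> for f = negative entropy."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    grad_f_q = np.log(q) + 1.0  # gradient of sum_k q_k log q_k
    return neg_entropy(p) - neg_entropy(q) - float(grad_f_q @ (p - q))

# Hypothetical predicted distribution over a 3-token vocabulary.
q = np.array([0.7, 0.2, 0.1])
one_hot = np.array([0.0, 1.0, 0.0])  # ground-truth token is index 1

divergence = bregman_neg_entropy(one_hot, q)
cross_entropy = -np.log(q[1])
print(divergence, cross_entropy)
```

For a one-hot target the two printed values coincide, which is the per-position term inside the CE objective.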

The key insight of our training design is two-fold. First, optimizing the model with the cross-entropy loss avoids mode collapse. Unlike latent diffusion for images, our embedding layer must be trained jointly with the model. Regressing the velocity directly can then converge to a trivial solution in which the embeddings of different tokens coincide, which is a form of mode collapse. Second, under this design, our model takes (noisy) embeddings as input and predicts discrete token likelihoods, similar to discrete diffusion and autoregressive Transformers. This means that it can share the same network architecture with discrete diffusion.

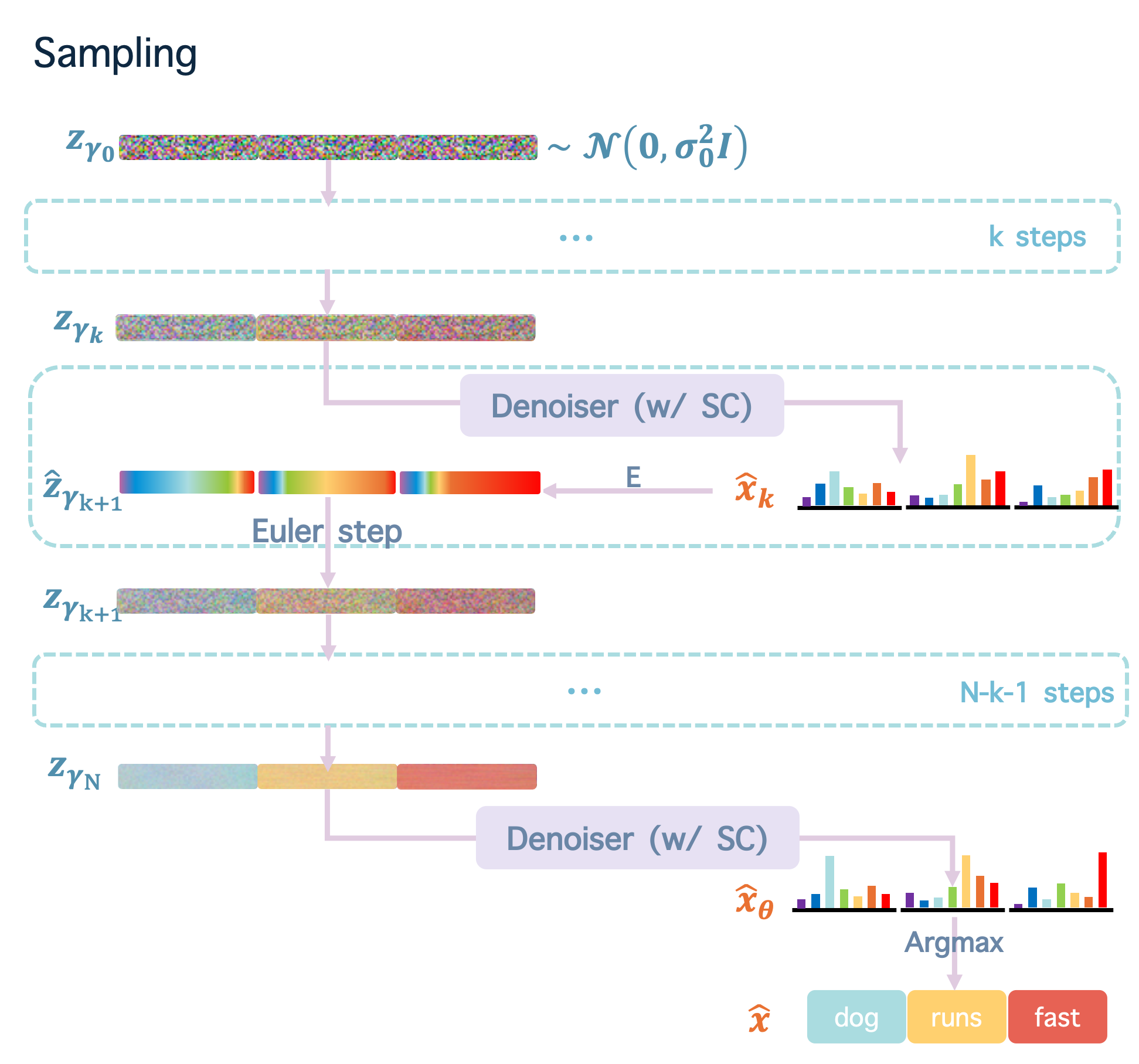

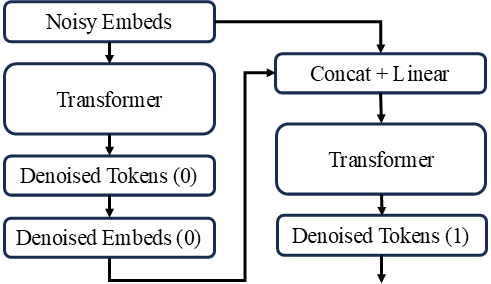

Sampling. With a trained velocity function \(\mathbf{v}_\theta(\mathbf{z}_t, t)\), we sample text by a two-stage process. First, we generate a clean text embedding \(\mathbf{z}_{\gamma_N}\) by progressively denoising a sample \(\mathbf{z}_{\gamma_0}\) from the Gaussian prior, as in standard Flow Matching. Second, we apply the discrete token predictor \(\hat{\mathbf{x}}_\theta(\mathbf{z}_t, t)\) (the Denoiser in the figure, which is part of \(\mathbf{v}_\theta(\mathbf{z}_t, t)\) as described in Training) to the clean text embedding \(\mathbf{z}_{\gamma_N}\) to obtain the final probability distribution \(\hat{\mathbf{x}}_\theta\), and select each token \(\hat{x}\) as the argmax of this distribution.
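The two-stage procedure can be sketched on a toy problem. Everything below is an illustrative assumption rather than the LangFlow implementation: the trained DiT is replaced by the exact token posterior under a uniform token prior, the ODE solver is replaced by a simple deterministic DDIM-style update, and the variance-preserving parameterization \(\alpha^2 = \mathrm{sigmoid}(-\gamma)\), \(\sigma^2 = \mathrm{sigmoid}(\gamma)\) is used for the noise level \(\gamma\).

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def toy_token_posterior(z, emb, gamma):
    """Exact p(x | z_gamma) per position under a uniform token prior (stand-in for the DiT)."""
    alpha, sigma2 = np.sqrt(sigmoid(-gamma)), sigmoid(gamma)
    # Squared distance between each noisy vector and each scaled token embedding.
    d2 = ((z[:, None, :] - alpha * emb[None, :, :]) ** 2).sum(-1)
    logits = -d2 / (2.0 * sigma2)
    logits -= logits.max(axis=-1, keepdims=True)  # numerical stability
    probs = np.exp(logits)
    return probs / probs.sum(axis=-1, keepdims=True)

def sample_tokens(emb, seq_len, gammas, rng):
    """Stage 1: denoise a Gaussian sample along `gammas`; stage 2: argmax over tokens."""
    z = rng.standard_normal((seq_len, emb.shape[1]))
    for g_cur, g_next in zip(gammas[:-1], gammas[1:]):
        probs = toy_token_posterior(z, emb, g_cur)
        z_hat = probs @ emb  # expected clean embedding under the predicted distribution
        a_cur, s_cur = np.sqrt(sigmoid(-g_cur)), np.sqrt(sigmoid(g_cur))
        a_next, s_next = np.sqrt(sigmoid(-g_next)), np.sqrt(sigmoid(g_next))
        eps_hat = (z - a_cur * z_hat) / s_cur  # implied noise estimate
        z = a_next * z_hat + s_next * eps_hat  # deterministic move to the next noise level
    probs = toy_token_posterior(z, emb, gammas[-1])
    return probs.argmax(axis=-1)

rng = np.random.default_rng(0)
emb = rng.standard_normal((5, 8))      # hypothetical vocabulary of 5 tokens, D = 8
gammas = np.linspace(8.0, -8.0, 33)    # from nearly pure noise to nearly clean
tokens = sample_tokens(emb, seq_len=6, gammas=gammas, rng=rng)
print(tokens)
```

The evenly spaced grid over \(\gamma\) is only a placeholder for whatever step schedule is actually used.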

Noise scheduling

TL; DR: Good noise schedules for text focus on high-noise regions and differ substantially from those for images. Choosing such a noise schedule correctly is the key to tuning continuous diffusion on text.

The problem with standard schedulers

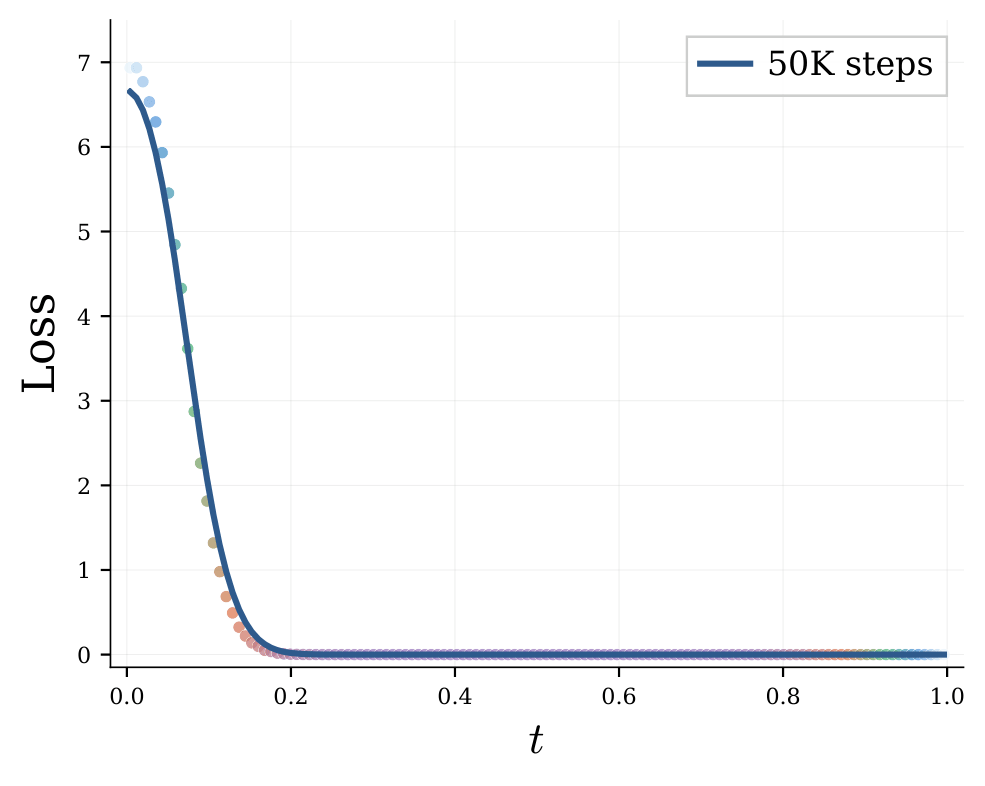

Training continuous diffusion requires choosing how to schedule the noise level \(\sigma_t\) (and signal \(\alpha_t\)) across the diffusion time \(t \in [0, 1]\). For image models, popular design choices place training emphasis around intermediate noise levels, where the visual signal-to-noise ratio is moderate (around \([0.5, 2]\)). This heuristic works because the image loss profile is spread fairly evenly across \(t\).

Text embeddings, however, tell a very different story. When training with a naive uniform schedule over \(t\), we observe that our cross-entropy loss is nearly zero for \(t \in [0.2, 1.0]\). This means that the model adds nothing to the generated data during this interval and that all meaningful information is generated in the first \(20\%\) of the diffusion path. This is intuitive because different text embeddings are far more isolated from each other than images are. Standard image schedulers therefore waste more than half of their training and sampling steps on noise levels that carry no useful information.

We then notice that our loss changes sharply mainly in high-noise regions. Hence, instead of conditioning the network on \(t\), we introduce the logarithmic noise-to-signal ratio (logNSR)

\[\gamma_t = \log\!\left(\frac{\sigma_t^2}{\alpha_t^2}\right),\]

as our time conditioning, which monotonically maps \(t \in [0, 1]\) to \(\gamma \in (+\infty, -\infty)\). Pure noise corresponds to \(\gamma \to +\infty\), and perfectly recovered data to \(\gamma \to -\infty\). Restricting to variance-preserving (VP) paths where \(\alpha_\gamma^2 + \sigma_\gamma^2 = 1\), the noisy embedding at a given noise level is simply:

\[\mathbf{z}_\gamma = \sqrt{\sigma(-\gamma)}\, \mathbf{z} + \sqrt{\sigma(\gamma)}\, \boldsymbol{\epsilon}, \quad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}),\]

where \(\sigma(\cdot)\) denotes the sigmoid function (not the noise level \(\sigma_t\)). The main advantage of conditioning on \(\gamma\) is that whenever the NSR doubles, the time conditioning shifts by only a constant (\(\log 2 \approx 0.69\)), which keeps the conditioning signal smooth even where the NSR grows dramatically. This lets us allocate more resolution to high-noise regions, where most of the change in our training loss is concentrated.
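The variance-preserving perturbation above can be written in a few lines. This is a minimal NumPy sketch; the function names and the example shapes are illustrative assumptions.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def vp_coefficients(gamma):
    """Return (alpha, sigma) with alpha^2 = sigmoid(-gamma) and sigma^2 = sigmoid(gamma)."""
    return np.sqrt(sigmoid(-gamma)), np.sqrt(sigmoid(gamma))

def perturb(z, gamma, rng):
    """Draw z_gamma = alpha * z + sigma * eps with eps ~ N(0, I)."""
    alpha, sigma = vp_coefficients(gamma)
    return alpha * z + sigma * rng.standard_normal(z.shape)

gamma = 1.5
alpha, sigma = vp_coefficients(gamma)
log_nsr = np.log(sigma**2 / alpha**2)  # recovers gamma

rng = np.random.default_rng(0)
z_clean = rng.standard_normal((4, 16))  # hypothetical L = 4 tokens, D = 16
z_noisy = perturb(z_clean, gamma, rng)
print(alpha**2 + sigma**2, log_nsr)
```

Because \(\mathrm{sigmoid}(\gamma) / \mathrm{sigmoid}(-\gamma) = e^{\gamma}\), the log noise-to-signal ratio of the perturbed sample is exactly the conditioning value \(\gamma\).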

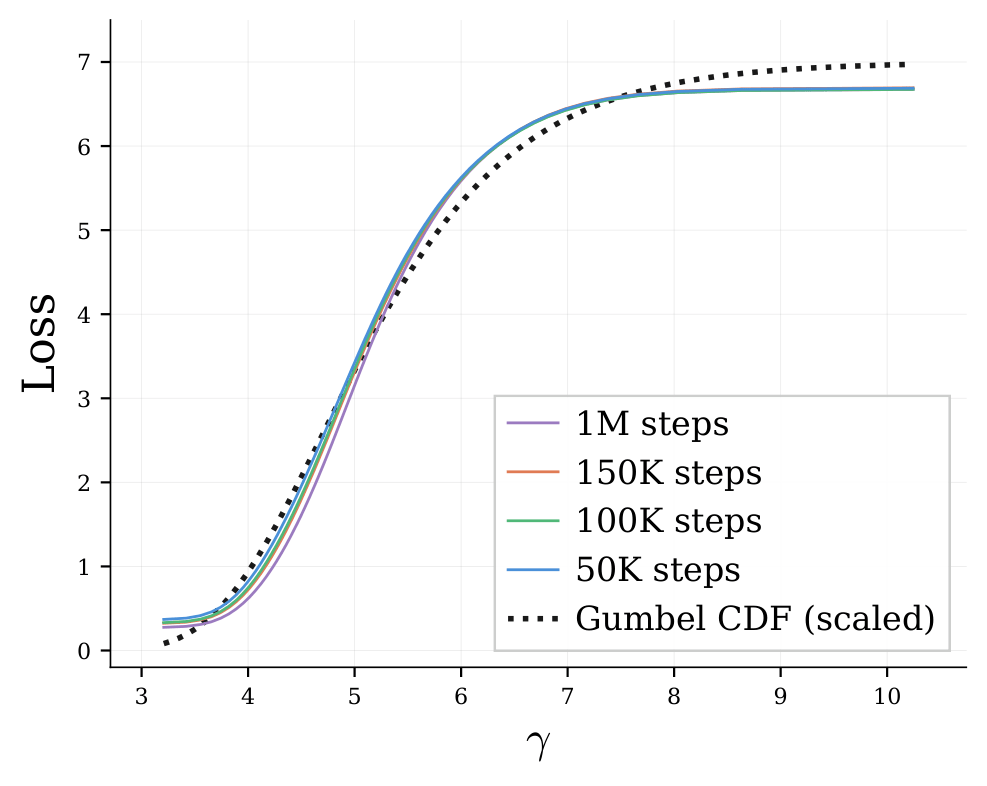

Matching the schedule to information gain

Plotting the per-noise-level CE loss \(\mathcal{L}_\gamma = \frac{1}{L}\sum_i \mathbb{E}[-\log q_\theta^{(i)}(x^{(i)} \mid \mathbf{z}_\gamma, \gamma)]\) over \(\gamma\) reveals something striking: the loss curve is remarkably stable across training stages (from 50k to 1M steps). This is no coincidence. By the standard information-theoretic decomposition,

\[\mathbb{E}_{x^{(i)} \sim p}\left[-\log q_\theta^{(i)}(x^{(i)})\right] = \mathrm{KL}(p \,\|\, q_\theta^{(i)}) + H(p),\]

the KL term quickly converges to zero as the model learns the posterior, leaving the loss dominated by the irreducible posterior entropy \(H(x^{(i)} \mid \mathbf{z}_\gamma)\). The loss profile is therefore a fingerprint of the information content of each noise level: it is a property of the data, not the model. In other words, after the very early stage of training, we have \(\mathcal{L}_\gamma\approx H_\gamma\).
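The decomposition is easy to verify on a small example. The two distributions below are arbitrary illustrative values, not measurements from any model.

```python
import numpy as np

p = np.array([0.6, 0.3, 0.1])    # hypothetical true posterior over 3 tokens
q = np.array([0.5, 0.25, 0.25])  # hypothetical model prediction

cross_entropy = -np.sum(p * np.log(q))  # E_{x ~ p}[-log q(x)]
kl = np.sum(p * np.log(p / q))          # KL(p || q), non-negative
entropy = -np.sum(p * np.log(p))        # H(p), independent of the model

print(cross_entropy, kl + entropy)
```

As \(q\) approaches \(p\), the KL term vanishes and the cross-entropy approaches the entropy, which is why the loss curve stops changing once the model has learned the posterior.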

This means that reversing the diffusion path progressively adds information to the generated data, which motivates a heuristic principle: allocate training steps in proportion to the information gained per unit of \(\gamma\), i.e., in proportion to \(H'_\gamma = \frac{\partial}{\partial \gamma} H_\gamma\). Regions where the model learns more about the tokens per unit of noise level should receive both more training steps and more ODE sampling steps. This is the principle we use to sample \(\gamma\) during training and inference.

A Gumbel proposal for uniform-information scheduling

To apply our principle, we reuse the loss-\(\gamma\) curve above (which, as argued above, approximates the \(H_\gamma\)-\(\gamma\) curve). Comparing \(H'_\gamma\) from checkpoints at 50k, 100k, and 150k steps, we find that the derivative is unimodal and positively skewed, and that a Gumbel distribution matches it closely. Equivalently, \(H_\gamma\) follows the Gumbel cumulative distribution function up to scale:

\[H_\gamma \approx H(+\infty) \cdot \exp\!\left(-\exp\!\left(-\frac{\gamma - P_\mu}{P_\beta}\right)\right).\]

Hence, we parameterize the \(H_\gamma\)-\(\gamma\) curve by \(H'_\gamma \propto \mathrm{Gumbel}(\gamma; P_\mu, P_\beta)\), the Gumbel density with location \(P_\mu\) and scale \(P_\beta\). In our experiments, we set \(P_\mu\), \(P_\beta\), and \(H(+\infty)\) as trainable parameters to dynamically fit the ground truth \(H_\gamma\)-\(\gamma\) curve.

To summarize: during training, we sample \(\gamma\) from this Gumbel distribution and perturb the data accordingly. During ODE sampling, we schedule the integration steps according to the same distribution, concentrating solver steps where the information gain per unit of \(\gamma\) is largest.
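One way to realize both uses of the Gumbel proposal is through its quantile function. The sketch below is an assumption about how this could be done, not the LangFlow code: the values of `mu` and `beta` are placeholders for the fitted \(P_\mu\) and \(P_\beta\), and the truncation level `u_min` is a hypothetical choice to keep the grid finite.

```python
import numpy as np

def gumbel_cdf(gamma, mu, beta):
    """F(gamma) = exp(-exp(-(gamma - mu) / beta)), the shape assumed for H_gamma / H(+inf)."""
    return np.exp(-np.exp(-(gamma - mu) / beta))

def gumbel_quantile(u, mu, beta):
    """Inverse of gumbel_cdf for u in (0, 1)."""
    return mu - beta * np.log(-np.log(u))

def sample_training_gammas(n, mu, beta, rng):
    """Training: draw noise levels gamma ~ Gumbel(mu, beta)."""
    return rng.gumbel(loc=mu, scale=beta, size=n)

def ode_gamma_grid(num_steps, mu, beta, u_min=1e-3):
    """Sampling: grid from high gamma (noise) to low gamma (data) with equal
    increments of the Gumbel CDF, i.e. equal modeled information per step."""
    u = np.linspace(1.0 - u_min, u_min, num_steps + 1)
    return gumbel_quantile(u, mu, beta)

mu, beta = 2.0, 1.5  # placeholder values, not fitted parameters
rng = np.random.default_rng(0)
train_gammas = sample_training_gammas(10_000, mu, beta, rng)
grid = ode_gamma_grid(num_steps=16, mu=mu, beta=beta)
cdf_steps = np.diff(gumbel_cdf(grid, mu, beta))
print(grid[:3], cdf_steps[:3])
```

Each solver step on this grid covers the same fraction of the modeled entropy, so steps are dense where \(H'_\gamma\) is large and sparse elsewhere.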

The impact is dramatic: ablations show that this Gumbel proposal alone reduces the Gen. PPL (↓, measured by GPT-2-Large) of LangFlow by roughly 7× (from ~1000 to ~150). This design choice is the key step that allows continuous diffusion to match the sample quality of discrete diffusion.

Self-conditioning

TL; DR: Self-conditioning works differently on continuous and discrete diffusion. For discrete, it reduces generative perplexity at the cost of higher perplexity. For continuous, it significantly decreases both perplexity and generative perplexity.

Self-conditioning

Because self-conditioning is specifically designed to reduce step-wise error accumulation, it was widely assumed to have no effect on perplexity (PPL), a metric computed over the full data distribution rather than along particular generation trajectories. For this reason, prior works have commonly disabled self-conditioning when comparing discrete and continuous diffusion by PPL. For example, the DUO paper reports a PPL of 43.0 for DUO at 100k training steps on LM1B, against 89.9 for Plaid (with self-conditioning disabled). This gap has since been taken as evidence of continuous diffusion’s fundamental inferiority.

However, we find that this evaluation protocol is unfair to continuous diffusion, because self-conditioning has a dramatically different effect on continuous diffusion than on discrete diffusion. Turning self-conditioning on and off for MDLM (discrete) and LangFlow (continuous, ours) and measuring both PPL and Gen. PPL reveals a fundamental asymmetry:

| Model | Self-Cond. | Gen. PPL ↓ | PPL ↓ |
|---|---|---|---|
| MDLM | ✗ | 103.9 | 31.0 |
| MDLM | ✓ | 94.9 (-9.0) | 32.7 (+1.7) |
| LangFlow | ✗ | 154.2 | 49.0 |
| LangFlow | ✓ | 81.5 (-72.7) | 30.0 (-19.0) |

For discrete diffusion (MDLM), self-conditioning improves Gen. PPL — confirming the expected reduction in sampling-time error accumulation — but slightly degrades PPL. This trade-off is well-known and explains why self-conditioning is disabled in PPL-focused comparisons of discrete diffusion. For continuous diffusion (LangFlow), the picture is entirely different: self-conditioning significantly improves both Gen. PPL and PPL, which is enough to close the gap between the PPL of continuous and that of discrete diffusion.

Evaluating continuous diffusion by an ODE-based bound of negative log-likelihood

TL; DR: The likelihood bound of Flow Matching (ODE) provides a tight estimate of the NLL in continuous diffusion language models.

Evaluating the likelihood (corresponding to PPL) of a language model is as important as generating good samples. For autoregressive models, the exact log-likelihood is computed token by token. For discrete diffusion, the standard metric is a variational bound derived from the stochastic forward process (the negative evidence lower bound, NELBO, which upper-bounds the NLL). But for continuous diffusion with ODE sampling, the SDE-derived bound is structurally mismatched: the ODE and SDE traverse different probability pathways over the same data.

LangFlow adopts a deterministic generative process via probability flow ODE. We therefore derive a likelihood bound tailored specifically to this ODE trajectory, following Flow Matching

where \(\mathbf{z}_a \sim \mathcal{N}(\alpha_a \mathcal{E}^\top \mathbf{x},\, \sigma_a^2 \mathbf{I})\), \(\mathbf{z}_\gamma\) for \(a \le \gamma \le b\) is the reverse ODE trajectory given \(\mathbf{z}_a\), and \(\nabla \cdot \hat{\mathbf{z}}_\theta\) denotes the divergence of the denoiser with respect to \(\mathbf{z}_\gamma\).

There are four terms to unpack here. The first two account for the log-probability of the initial noisy state under the Gaussian prior. The third is the token-level cross-entropy at the end of the reverse trajectory — essentially measuring how well the model recovers the original tokens from near-clean embeddings. The fourth term captures the volume change induced by the ODE flow, analogous to the change-of-variables formula in normalizing flows; it accounts for how the deterministic flow compresses or expands probability mass along its trajectory.
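The divergence in the fourth term is the trace of the Jacobian of the vector field. In continuous normalizing flows, the log-density along an ODE trajectory changes at the rate of minus the divergence of the velocity. The sketch below computes a divergence by central finite differences for a hypothetical toy field standing in for the denoiser; for a real high-dimensional model one would typically use automatic differentiation or a stochastic trace estimator, which is an assumption on our part and not stated above.

```python
import numpy as np

def toy_field(z, weight):
    """Hypothetical smooth vector field standing in for the denoiser."""
    return np.tanh(weight @ z)

def divergence_fd(field, z, h=1e-5):
    """Divergence = trace of the Jacobian, estimated with central finite differences."""
    total = 0.0
    for i in range(z.size):
        step = np.zeros_like(z)
        step[i] = h
        total += (field(z + step)[i] - field(z - step)[i]) / (2.0 * h)
    return total

rng = np.random.default_rng(0)
weight = rng.standard_normal((4, 4))
z = rng.standard_normal(4)

numeric = divergence_fd(lambda v: toy_field(v, weight), z)
# Closed form for this toy field: sum_i (1 - tanh^2((W z)_i)) * W_ii.
analytic = float(np.sum((1.0 - np.tanh(weight @ z) ** 2) * np.diag(weight)))
print(numeric, analytic)
```

The finite-difference loop costs two field evaluations per dimension, which is why it is only practical for small examples like this one.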

This bound is appropriate in the sense that it is derived directly from the ODE dynamics rather than from a stochastic surrogate. It closes the evaluation gap between continuous and discrete diffusion language models, enabling apples-to-apples comparison of their data likelihoods.

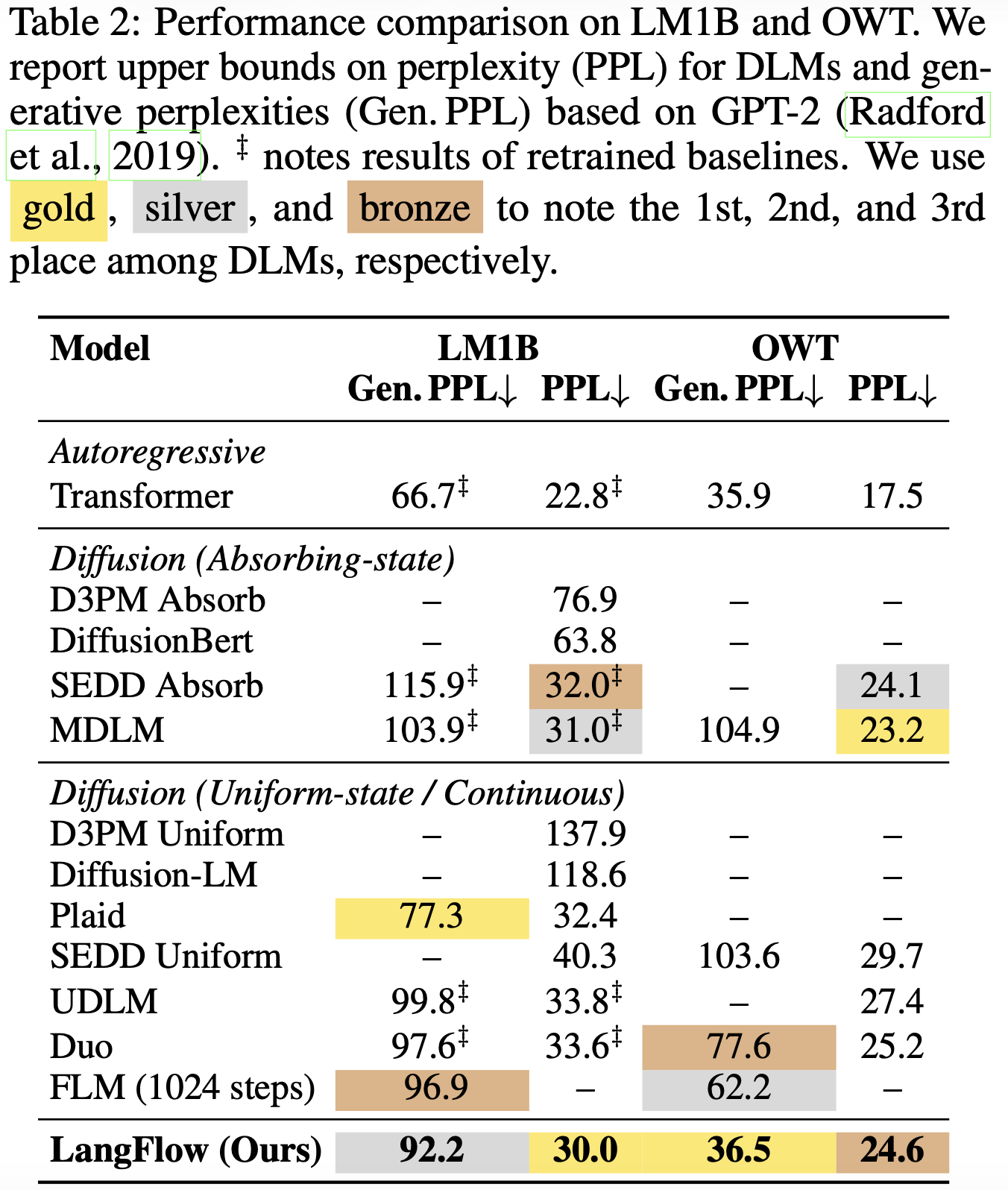

Experiments: Continuous diffusion rivals discrete in language modeling

TL; DR: Putting our designs together allows continuous diffusion to match or outperform discrete diffusion on LM1B and OWT, measured by PPL and Gen. PPL. Our model also achieves strong zero-shot transfer among diffusion models.

We evaluate LangFlow on two standard benchmarks: LM1B, the most widely used sentence-level language modeling benchmark, and OpenWebText (OWT), a corpus similar to the dataset used to train GPT-2.

We consider two experimental settings:

- In language modeling, we train models on the training splits of LM1B and OWT and report their perplexity (PPL) and generative perplexity (Gen. PPL) on the corresponding validation splits.
- In zero-shot transfer, we report the zero-shot perplexity on several held-out text datasets.

In both settings, all models are 130M-parameter diffusion transformers (DiT) trained for 1M steps, following the setup of existing literature. For diffusion models, we report an upper bound on the perplexity (PPL), and we measure generative perplexity (Gen. PPL) with GPT-2 Large on 1024 samples. For LM1B, the sample length is 128, with NFE=128. For OWT, the sample length is 1024, with NFE=1024.
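For reference, perplexity is the exponential of the average per-token negative log-likelihood, so an upper bound on the NLL translates directly into an upper bound on PPL. The helper below is a minimal sketch; the function name and the example numbers are illustrative only.

```python
import math

def perplexity(total_nll_nats, num_tokens):
    """PPL = exp(average negative log-likelihood per token, in nats)."""
    return math.exp(total_nll_nats / num_tokens)

# Hypothetical sequence of 128 tokens whose NLL bound averages log(30) nats per token.
num_tokens = 128
nll_bound = num_tokens * math.log(30.0)
ppl_bound = perplexity(nll_bound, num_tokens)
print(ppl_bound)
```

Because the exponential is monotone, a looser NLL bound always yields a larger reported PPL, never a smaller one.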

Language Modeling

On LM1B, LangFlow achieves a Gen. PPL of 81.5, outperforming the best discrete diffusion baseline (DUO at 97.6) by more than 15 points. In terms of dataset PPL, LangFlow (30.0) beats all discrete diffusion baselines. On OWT, LangFlow (24.6) trails MDLM (23.2) by a perplexity gap of only 1.4. This is the first time that continuous diffusion rivals discrete diffusion on standard language modeling benchmarks.

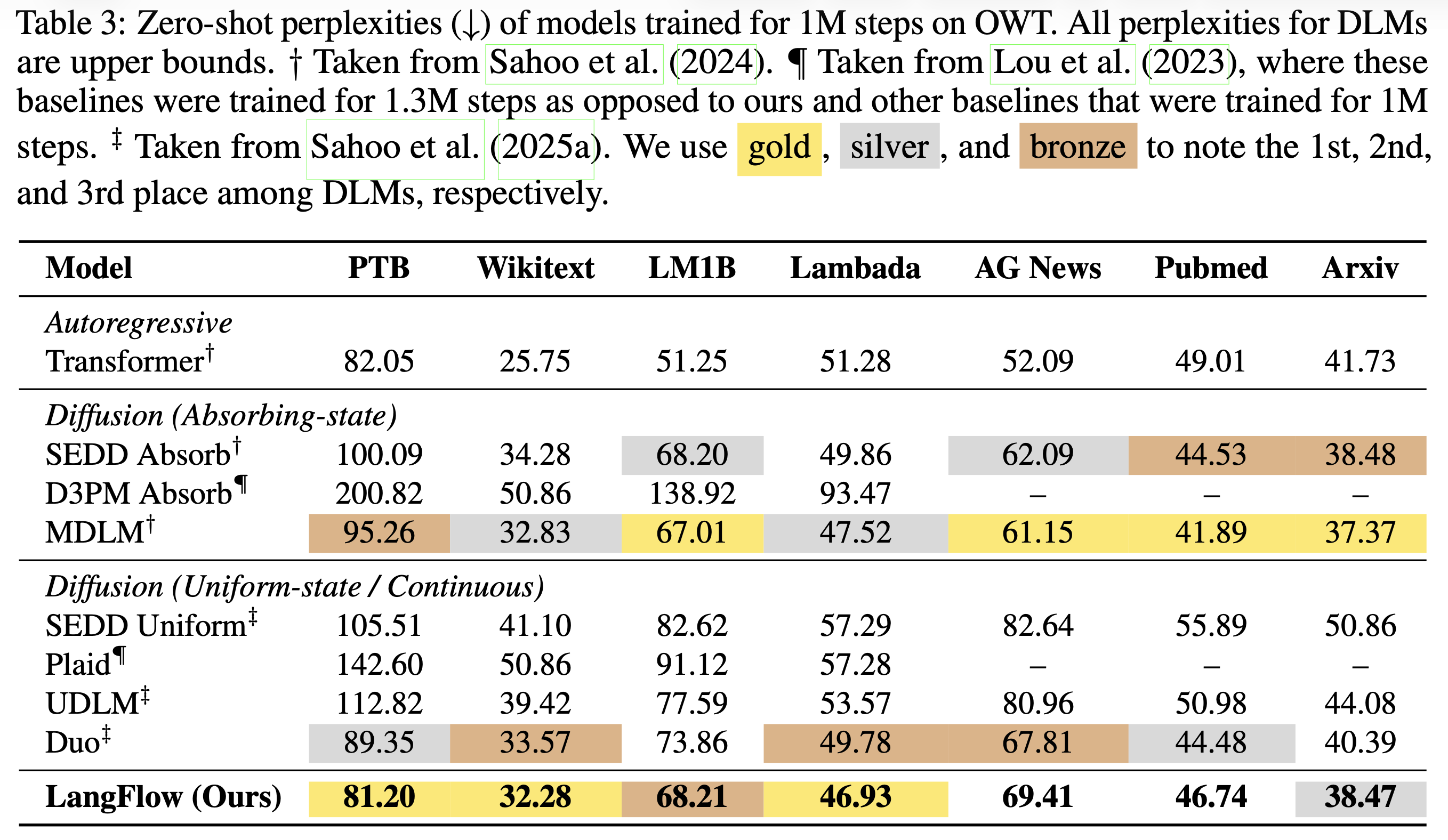

Zero-shot transfer

Across 7 zero-shot benchmarks, LangFlow beats the autoregressive baseline on 4 out of 7 (PTB, Lambada, PubMed, Arxiv) and MDLM on 3 out of 7 (PTB, Wikitext, Lambada). Overall, LangFlow performs better in zero-shot transfer compared to existing continuous and uniform diffusion methods.

Discussion: Why continuous diffusion language models?

TL;DR: Diffusion should complement autoregressive models in controllability and multimodality rather than replace them. Continuous diffusion is well suited to this goal.

In previous sections, we showed that continuous diffusion can empirically rival discrete diffusion in language modeling. However, a substantial performance gap between diffusion and autoregressive methods remains. Experimental results at scale consistently indicate that diffusion does not outperform autoregressive models overall in language modeling. This brings us back to the question raised at the beginning: why should we pursue diffusion language models?

Sampling efficiency alone is not a sufficiently strong justification. While much of the literature presents it as a potential advantage, recent large-scale comparisons

Given that autoregressive Transformers have become the gold standard for both effectiveness and efficiency in language modeling, a more practical goal for diffusion language models is to complement them rather than replace them. From this perspective, we can identify two key limitations of autoregressive models where diffusion may offer distinct advantages:

1) Controllability. Autoregressive models are inherently limited in controllability because they do not leverage latent variables during sampling. In contrast, diffusion models transform noisy latent variables into data samples through editable trajectories. This creates opportunities for controllable generation, such as guidance mechanisms. Diffusion models can therefore serve as controllable drafters in speculative decoding

2) Multimodality. The physical world consists of continuous signals—images, video, audio, and actions. Representing these signals as discrete tokens in autoregressive models is not a natural approach, particularly when multiple modalities interact. Because diffusion operates in continuous spaces, it is a promising foundation for multimodal paradigms grounded in the physical world, such as vision-centric world models.

Admittedly, without large-scale empirical validation, these hypotheses about the potential of diffusion language models remain unconfirmed. Nevertheless, we believe the future of language modeling lies not in a single unified model, but in a system of diverse models that each leverage their own strengths in a coordinated framework. From this perspective, the goal of developing diffusion language models should not be to chase performance by mimicking autoregressive methods at the cost of losing their defining characteristics. Instead, we should optimize diffusion while preserving what makes it unique, and then identify its role within the broader ecosystem of language models. In other words, we should no longer force diffusion into the autoregressive paradigm. Let diffusion be diffusion first.

This leads to our answer to why we work on continuous diffusion language models: they may not be the most performant variant, but they best preserve the defining properties of diffusion itself—and that is precisely why diffusion language models are worth pursuing.